Through your eyes

A robot that mirrors how we interpret others through our own biased social lens

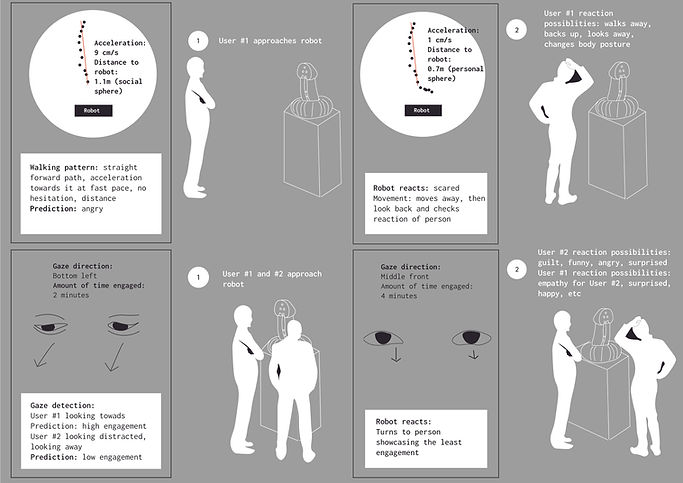

Meet a robot that reads social cues through radar and gaze tracking and reacts to the behavior it perceives. A live visualization reveals its reasoning, mirroring how cognitive biases shape interaction. The platform explores human-robot interaction from multiple perspectives, first-person and third-person, each yielding a different interpretation. As with our subjective views of others, there is no single objective truth. It invites reflection on how humans and machines construct meaning from ambiguous signals, and on the invisible lenses through which we judge ourselves and others.

Project conducted for a Master's thesis

Duration: 5 months

Designer: Hanna Loschacoff

Technology:

- ESP32 microcontroller + 24 GHz radar sensor (serial communication)
- Servo motor control (physical shape-changing response)
- Node.js WebSocket server (real-time browser ↔ hardware communication)
- WebGazer.js (open-source browser-based gaze tracking)
- HTML + JavaScript (React framework, data processing & real-time visualization)
- Local hosting via Live Server (VS Code)
- Privacy: fully local, ephemeral, no data persistence
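
To make the data flow between these components more concrete, here is a minimal sketch of how a radar reading and a gaze sample might be fused into a single interpretation that drives the servo. It is not the project's actual code: the serial line format (`distance=…;moving=…`), the 3 m range, the equal weighting, and the function names `parseRadarLine` and `interpret` are all assumptions made for illustration. The gaze sample is assumed to be an `{ x, y }` point in viewport pixels, like the predictions WebGazer.js passes to its gaze listener.

```javascript
// Illustrative sketch only; message formats, ranges and weights are assumptions.

function clamp(value, min, max) {
  return Math.min(max, Math.max(min, value));
}

// Parse one line of serial text from the microcontroller.
// Assumed (hypothetical) format: "distance=150;moving=1"
function parseRadarLine(line) {
  const fields = Object.fromEntries(
    line.trim().split(";").map((pair) => pair.split("="))
  );
  const distanceCm = Number(fields.distance);
  if (!Number.isFinite(distanceCm)) return null; // ignore malformed lines
  return { distanceCm, moving: fields.moving === "1" };
}

// Fuse radar and gaze into one "perceived engagement" score in [0, 1]
// and map it to a servo angle in degrees (0 to 180).
function interpret(radar, gaze, viewport) {
  const maxRangeCm = 300; // assumed useful radar range
  const proximity = 1 - clamp(radar.distanceCm / maxRangeCm, 0, 1);

  // Attention is highest when the gaze point is at the screen centre;
  // it is 0 when no gaze sample is available.
  let attention = 0;
  if (gaze) {
    const dx = (gaze.x - viewport.width / 2) / (viewport.width / 2);
    const dy = (gaze.y - viewport.height / 2) / (viewport.height / 2);
    attention = 1 - clamp(Math.hypot(dx, dy), 0, 1);
  }

  // Equal weighting is an arbitrary choice, i.e. one possible "lens".
  const engagement = 0.5 * proximity + 0.5 * attention;
  return {
    proximity,
    attention,
    engagement,
    servoAngle: Math.round(engagement * 180),
  };
}

// Example: a person 1.5 m away looking at the centre of an 800x600 view.
const radar = parseRadarLine("distance=150;moving=1");
const result = interpret(radar, { x: 400, y: 300 }, { width: 800, height: 600 });
console.log(result.servoAngle);
```

Changing the weights or the range changes what the robot "sees" in the same signals, which is the kind of built-in bias the live visualization is meant to expose.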

Final design

Final interaction loop

Final stand

Data pipeline

Process

Methodology: Research through Design

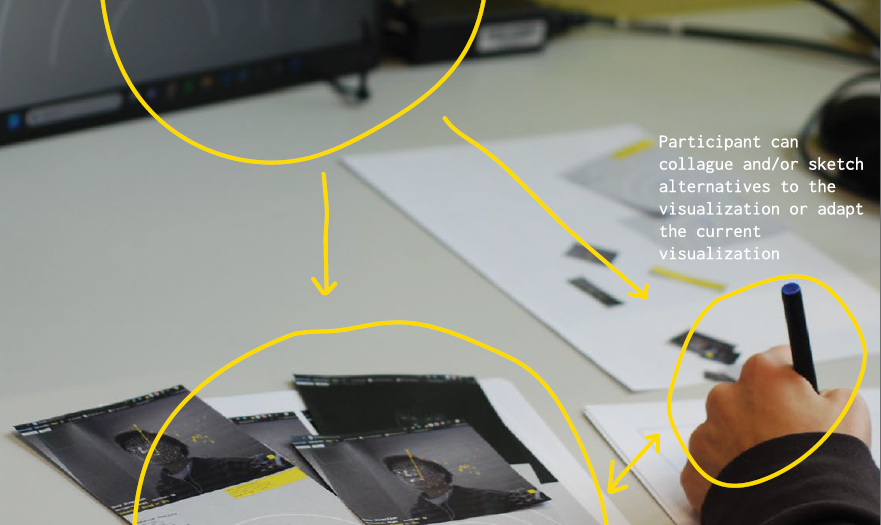

Making logs of integrating sensing (gaze and radar)

Designing live visualization

Ideating interaction loop

Interaction loop evolution

Designing and building the integrated aluminium stand

Form exploration
